- Blog

- Immoral ward illusion wiki

- Numetal band covering the offspring gone away

- Stats modeling the world 3rd edition

- I cannot make changes to the world in the msts route editor

- Orange is the new black season 5 episode 13 analysis

- Shape builder tool adobe illustrator cs5

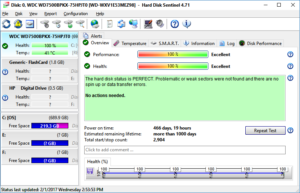

- Spinrite 6 ecc corrected

- Female cartoon characters naked anime dragon ball z

- Winchester m14 co2 air rifle repair manual

- Pc zombie survival games

- The kite game

- Start go fund me

> What's the current recommendation around RAID capacity before you have to seriously start worrying about drive failure before it can be rebuilt?

That depends on the size of your drives and the number in the array. If you are running a small NAS with only one parity drive, you don't want drives that take more than a day to onboard. If you have two parity drives in the array you can probably risk the 8/10/12 TB size. If you are running commercial-scale arrays of dozens of drives, arrays that can handle multiple failures at once, then the sky is probably the limit.

But the story gets more complicated. Are all the drives of the same model/manufacturer? Are all of your drives the same age? Such things increase the risk of one failure evolving into a multi-drive failure during rebuild. If/when I build a new array I want drives of different ages. So if I had 10 drives, I would start the array on five or six, holding some for later so that the entire array isn't the same age.

It already takes a long time to reconstruct any "wide" vdev in ZFS that is raidz2. So one wonders if you could solve for the device capacity where 'reconstruction takes 3 years' (the depreciated life span of a drive at Google).
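To put rough numbers on "more than a day to onboard" and the "reconstruction takes 3 years" thought experiment, here is a minimal back-of-the-envelope sketch. It assumes the rebuild is limited only by writing the replacement drive end to end at a steady sequential rate. The 150 MB/s default and both function names are illustrative assumptions, not figures from the discussion, and real rebuilds on a busy array are usually slower.

```python
def rebuild_hours(capacity_tb: float, throughput_mb_s: float = 150.0) -> float:
    """Hours to fill one replacement drive at a steady sequential rate.

    throughput_mb_s is an assumed sustained rate, not a measured one.
    """
    capacity_bytes = capacity_tb * 1e12  # decimal TB, as drives are sold
    seconds = capacity_bytes / (throughput_mb_s * 1e6)
    return seconds / 3600.0


def capacity_tb_for_rebuild(hours: float, throughput_mb_s: float = 150.0) -> float:
    """Inverse question: drive size (TB) whose rebuild takes `hours`."""
    return hours * 3600.0 * throughput_mb_s * 1e6 / 1e12


if __name__ == "__main__":
    for tb in (8, 10, 12):
        print(f"{tb} TB drive: {rebuild_hours(tb):.1f} h to rebuild")
    three_years_h = 3 * 365 * 24
    print(f"3-year rebuild: {capacity_tb_for_rebuild(three_years_h):.0f} TB")
```

Under these assumptions a 12 TB drive lands at roughly 22 hours, which is already close to the one-day threshold mentioned above for a single-parity NAS.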

The more interesting data point is the "URE" (unrecoverable read error) spec. All of these drives do a track at a time with full ECC to facilitate reading in the presence of noise, but a track write can have effects on adjacent tracks, temperature can affect head flying height, vibration can affect head tracking, and so on. All of these, statistically, can create a non-recoverable bit error. Typically this spec is something like 1 in 10^14 bits. What is interesting about this spec is that it is statistical, meaning that the more bits you read, the more likely you are to hit a URE. HDD manufacturers do all sorts of things to avoid them (retries, track mirroring, etc.), but ultimately it is a mechanical system and there are a LOT of bits.

When I was at NetApp, the company would get reports of UREs that were fixed by RAID reconstruction (so you read a disk, you get an error, and you fix it using the ECC in the other drives). And we had determined that by 4TB it would be "stupid" to use mirroring for protection, since if a drive failed you couldn't count on being able to resilver the mirror by getting a clean read of the other half (something like 1 in 20 attempts would fail and you'd have data loss). And of course, to reconstruct a RAID-5 group after a drive failure you need to read all the other drives, so for larger groups of disks (which people built to maximize data storage) you started to be at risk of not being able to reconstruct all of the stripes. That was the genesis of doing dual parity (which NetApp called 'diagonal parity' because of the design). The ZFS folks have also considered this and designed both dual and TRIPLE parity, which makes sense for these larger drives!
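The statistical nature of the URE spec can be sketched numerically. The snippet below is a simplified model, not a vendor formula: it treats the spec as an independent per-bit error probability of 1 in 10^14 and asks how likely at least one URE is when reading a given amount of data. The function names and the 8-drive example are illustrative assumptions. Reading the spec this way is pessimistic, so field rates (such as the 1 in 20 figure quoted above) can come out lower than the model predicts.

```python
import math


def p_at_least_one_ure(bytes_read: float, bits_per_error: float = 1e14) -> float:
    """P(at least one URE) when reading bytes_read bytes.

    Assumes each bit fails independently with probability 1/bits_per_error,
    which is a simplification of how drives actually behave.
    """
    bits = bytes_read * 8
    # 1 - (1 - 1/N)^bits, using log1p/expm1 to keep precision for tiny 1/N
    return -math.expm1(bits * math.log1p(-1.0 / bits_per_error))


def p_raid5_rebuild_hits_ure(n_drives: int, drive_bytes: float,
                             bits_per_error: float = 1e14) -> float:
    """A RAID-5 rebuild must read every surviving drive in full."""
    return p_at_least_one_ure((n_drives - 1) * drive_bytes, bits_per_error)


if __name__ == "__main__":
    tb = 1e12
    print(f"mirror, 4 TB: {p_at_least_one_ure(4 * tb):.2%}")
    print(f"RAID-5, 8 x 4 TB: {p_raid5_rebuild_hits_ure(8, 4 * tb):.2%}")
```

The useful part is the shape of the curve rather than the exact percentages: the probability climbs with every additional bit read, which is why wide single-parity groups of large drives become risky and why a second or third parity drive helps.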
